Forget the Output. Watch What Your Agent Reads Before It Writes.

234,760 tool calls revealed a 70% drop in read-to-edit ratio weeks before anyone noticed output quality declining. Behavioral fingerprints catch what output checks miss.

System architecture, performance, security, and the engineering decisions that matter at scale.

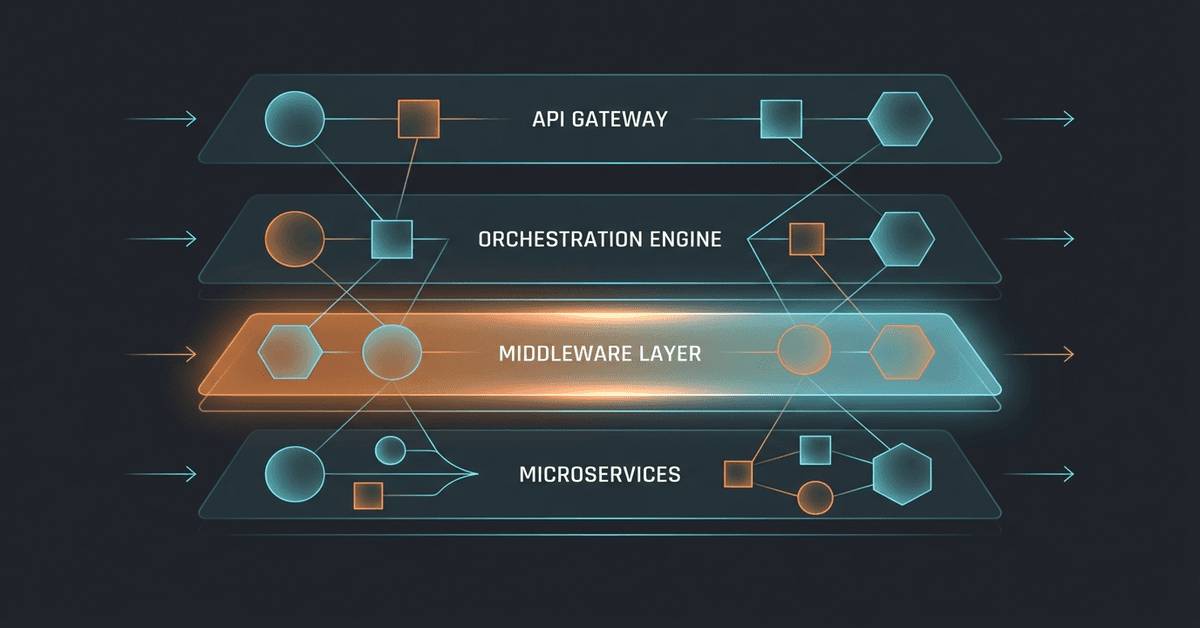

AI workloads punish the exact things microservices add: network hops, duplicated caches, serialization overhead. The monolith argument isn't about simplicity anymore. It's about money.

Three waves of productivity metrics. Story points, DORA, AI output. Each one optimized for a proxy until it broke. Each one made senior engineers less visible.

chardet has 130 million monthly downloads. A developer used Claude to rewrite it in five days and switched the license from LGPL to MIT. The original author came back after 15 years to object. I read the issue and realized I do this every day.

Everyone says AI makes coding faster. Nobody publishes the actual hours. My planning-to-execution ratio was 6.4:1. That ratio is why the overnight build worked.

Overnight autonomous agents are real, productive, and running on a flag with 'dangerously' in the name. Nobody has solved the trust problem. We've agreed to skip it.

Vercel Workflows ships crash recovery, step isolation, and durable state for agents. My pipeline uses BullMQ on a $7 VPS instead. Here's the trade-off.

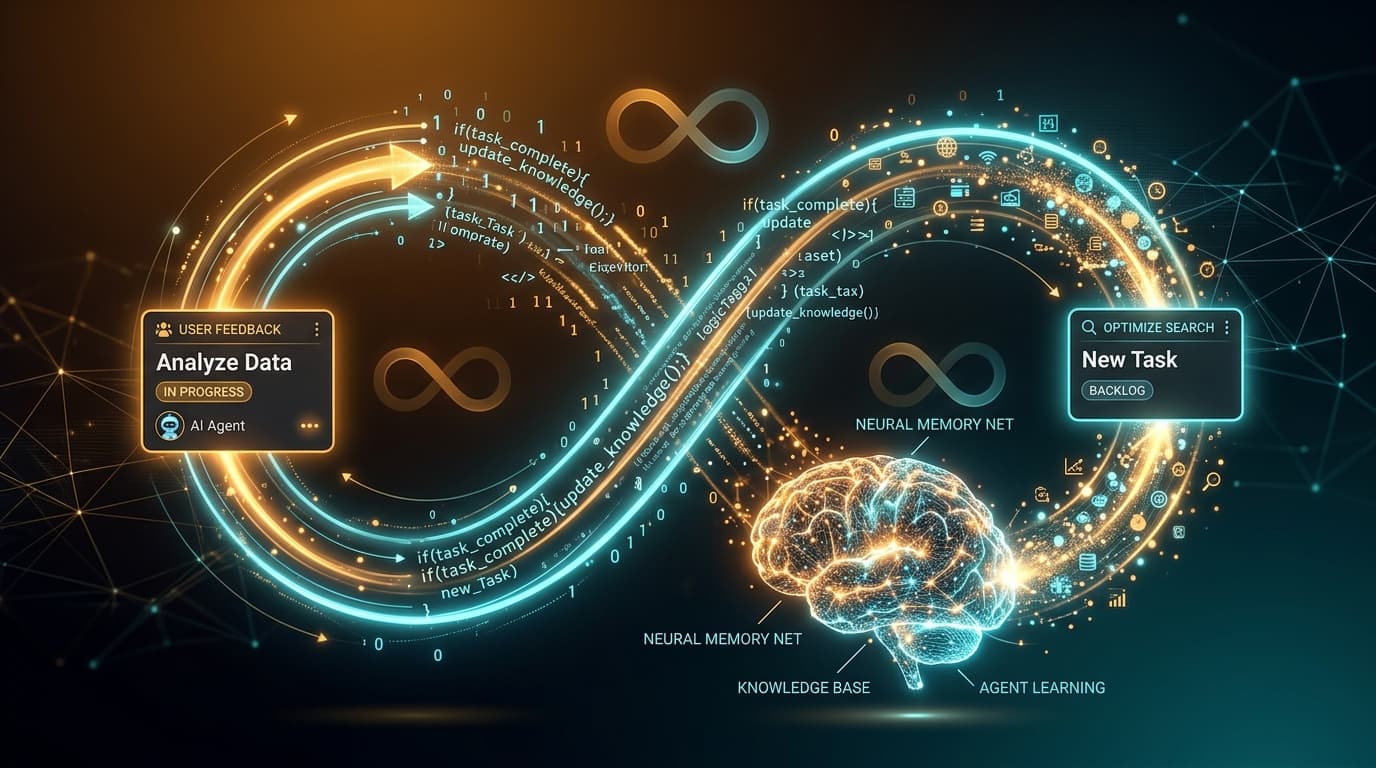

Anthropic launched persistent memory for Claude Managed Agents. I've been building my own memory engine for months. Here's what their version solves, what it doesn't, and why the hard half of agent memory isn't storage.

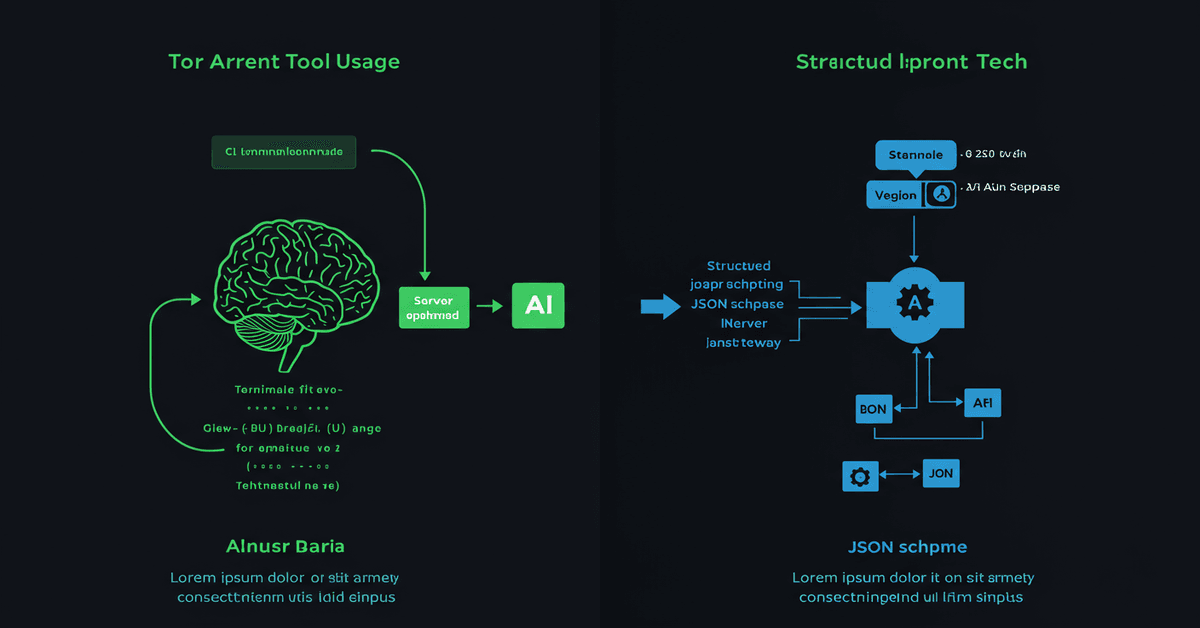

I've been doing context engineering for months without calling it that. A 547-line CLAUDE.md, subagent isolation, strategic compaction, six MCP servers. The term just caught up to the practice.

78% of AI failures are invisible. But the research only covers wrong outputs. The failure mode nobody's monitoring for is correct output at the wrong time, in the wrong scope, for the wrong reason.

GPT-5.4 scores 0.395 on edit faithfulness while Claude Opus 4.6 scores 0.060. Over-editing isn't a bug. It's a training incentive. Models are rewarded for solving the task, not for touching the least code.

SpaceX secured the right to acquire Cursor for $60B. That's more than GitHub, Slack, and Figma combined. But the real story isn't the price tag. It's what happens when your coding tool runs Grok instead of Claude.

The METR study found experienced developers are 19% slower with AI tools while believing they're 20% faster. I've lived that 39-point perception gap. Here are three failures that proved it.

GitHub's Rubber Duck ships GPT-5.4 as a reviewer for Claude Sonnet's code. The cross-model pattern is real, backed by ICLR 2026 research. But 'second opinion' is the wrong frame. The hardest agent failures need structured verification, not another model guessing.

Opus 4.7 shipped Tuesday. It removed temperature, killed budget_tokens, changed the tokenizer by 35%, and shifted how agents spawn subprocesses. My pipeline didn't break. I also didn't test for it. Neither did you.

Entity resolution errors compound exponentially. Graph decay runs 15-20% per quarter. Gradient Flow says they barely know of any production deployments offering real business value. The most hyped retrieval pattern of 2026 has a production problem nobody wants to own.

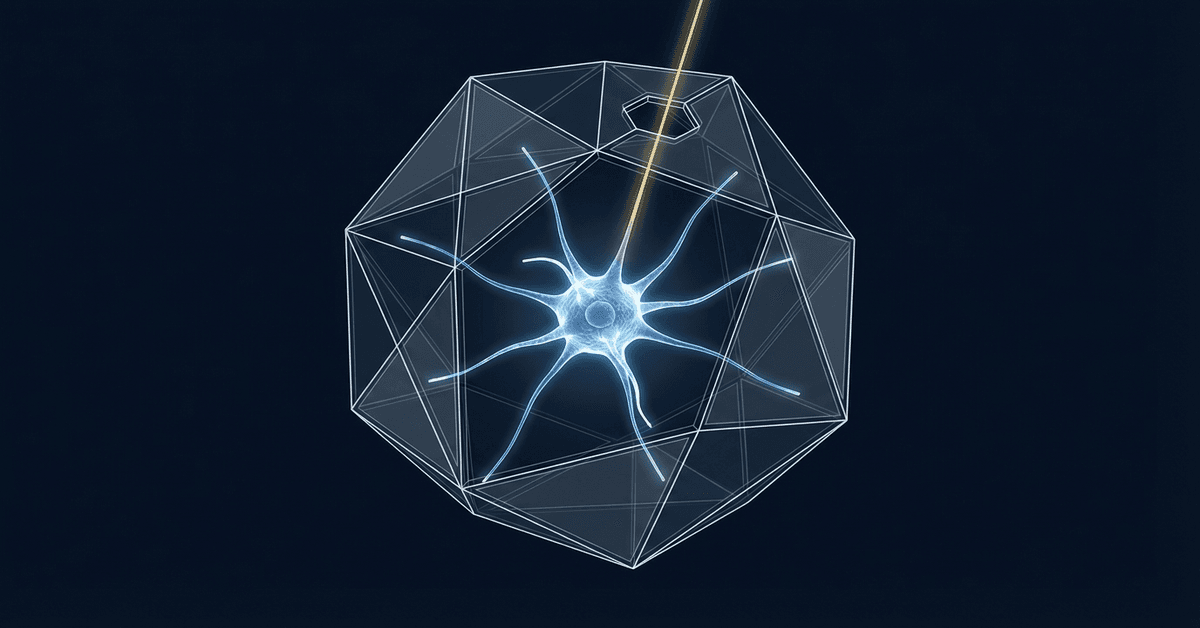

Your AI code reviewer gives the same feedback your team rejected three weeks ago. It can't know. I'm building the fix: two graphs, one structural and one cognitive, wired together through spreading activation.

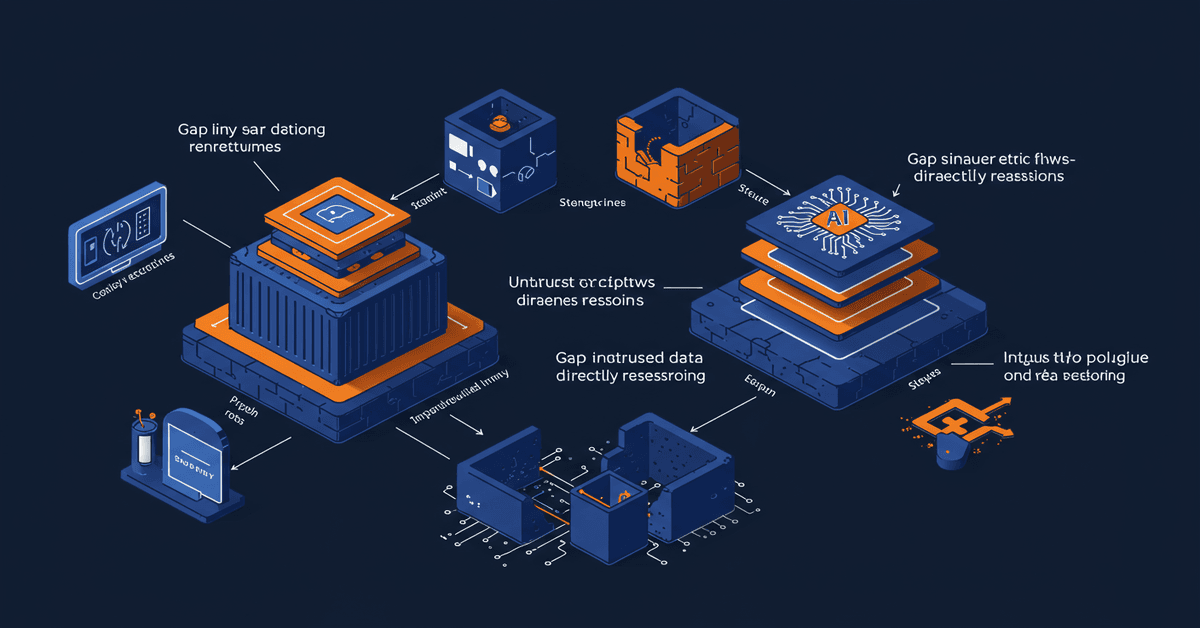

The most dangerous AI agent in your org isn't the one leadership is planning to deploy. It's the one a developer shipped last quarter with operator-level permissions and no review process.

My CLAUDE.md said 'NEVER publish without internal links.' The agent published with zero. The fix wasn't better rules. It was structural enforcement: eval harnesses, separate verifiers, and hooks that don't ask permission.

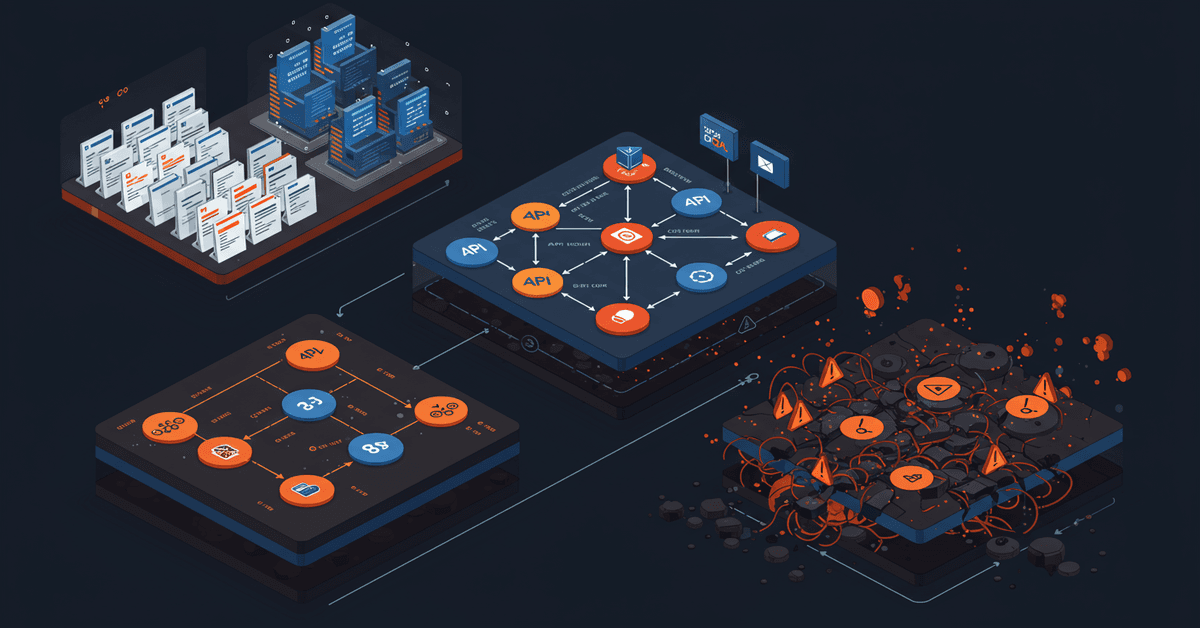

I built a multi-agent pipeline with BullMQ, hit every distributed systems failure in the book, and learned most tasks don't need multi-agent.

I set up end-to-end encryption to protect my Obsidian notes from everyone. Then I wrote 814 lines of TypeScript to decrypt them on the server and pipe them into an AI memory engine. I am the threat model I was protecting against.

The 10x speed promise is real for VS Code-scale projects. For my 7-package monorepo it's more like 3x. And nobody's talking about what we lose: the plugin API that Angular, Vue, and hundreds of tools depend on has no replacement timeline.

MCP won the standard war. But running six servers in production every day exposes failure modes no demo will show you: context bloat, silent auth failures, and tool selection that falls apart at scale.

The Pragmatic Engineer's 2026 survey says Claude Code is the most loved AI dev tool at 46%. Cursor sits at 19%. Copilot at 9%. I switched six months ago. The terminal won, and it wasn't even close.

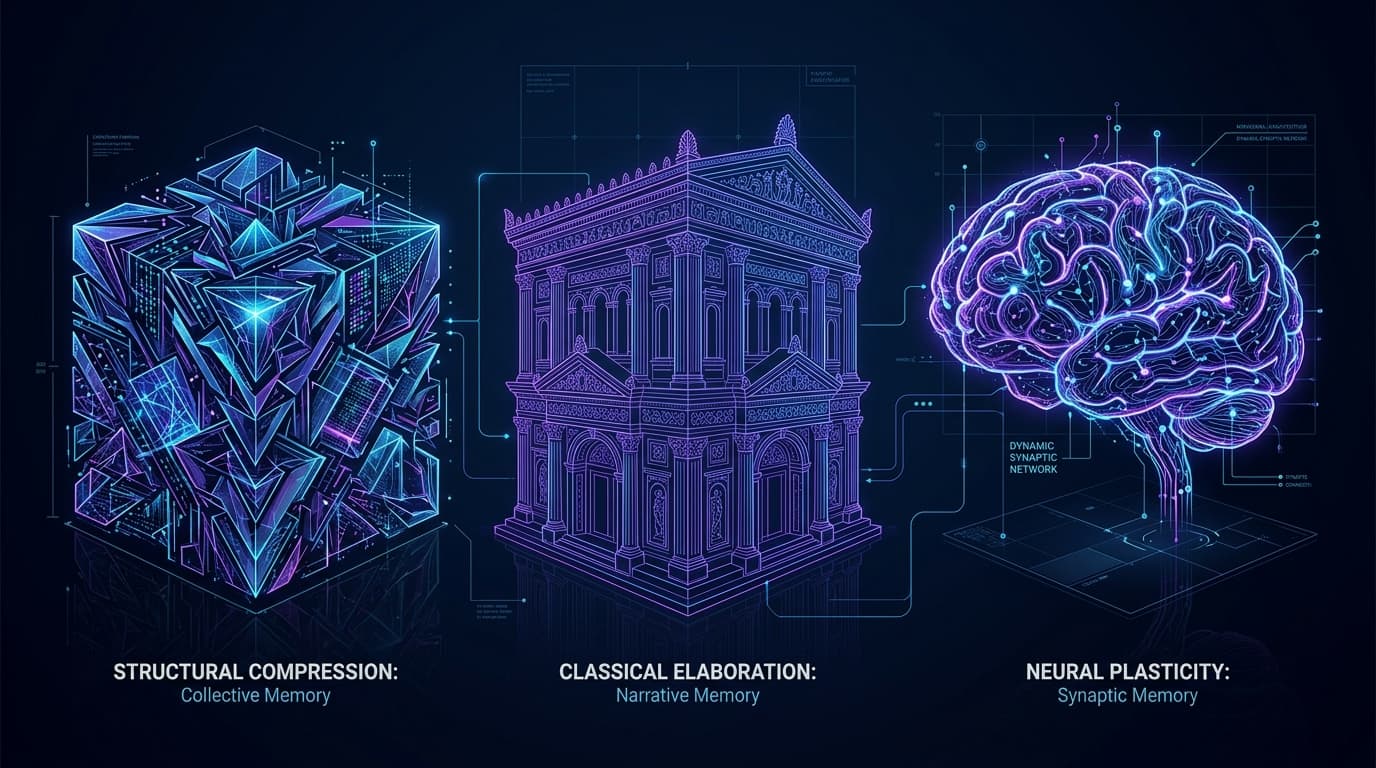

SimpleMem compresses conversations into atoms. MemPalace stores every word in a spatial hierarchy. Engram forgets on purpose. After a week with all three, I think they're solving different problems.

My AI agent applied seven patches to the same bug. Each one a fresh attempt. No accumulation. After connecting dispatch with memory, the same class of bug gets caught on the first try.

My AI agent wrote a perfect architecture spec for dual-storage memory. Then it ignored the whole thing and built a flat table. Seven patches later, I threw it all away and built Engram from neuroscience papers instead.

Two of my AI agents opened conflicting PRs on the same repo. I caught it at 11 PM. That's when I realized the control plane was already sitting on my screen: the kanban board.

The viral growth of oh-my-codex isn't about Codex. It's about who owns the orchestration layer. While Anthropic locks down, the community is building the real platform.

A former Azure Core engineer just published the most detailed infrastructure failure account since the Knight Capital postmortem. 173 unowned agents, 500K monthly crashes, and a trillion dollars gone.

512,000 lines of TypeScript leaked from a missing .npmignore. The architecture I reverse-engineered was spot-on. The implementation quality made me rethink what 'good code' means at $2.5B ARR.

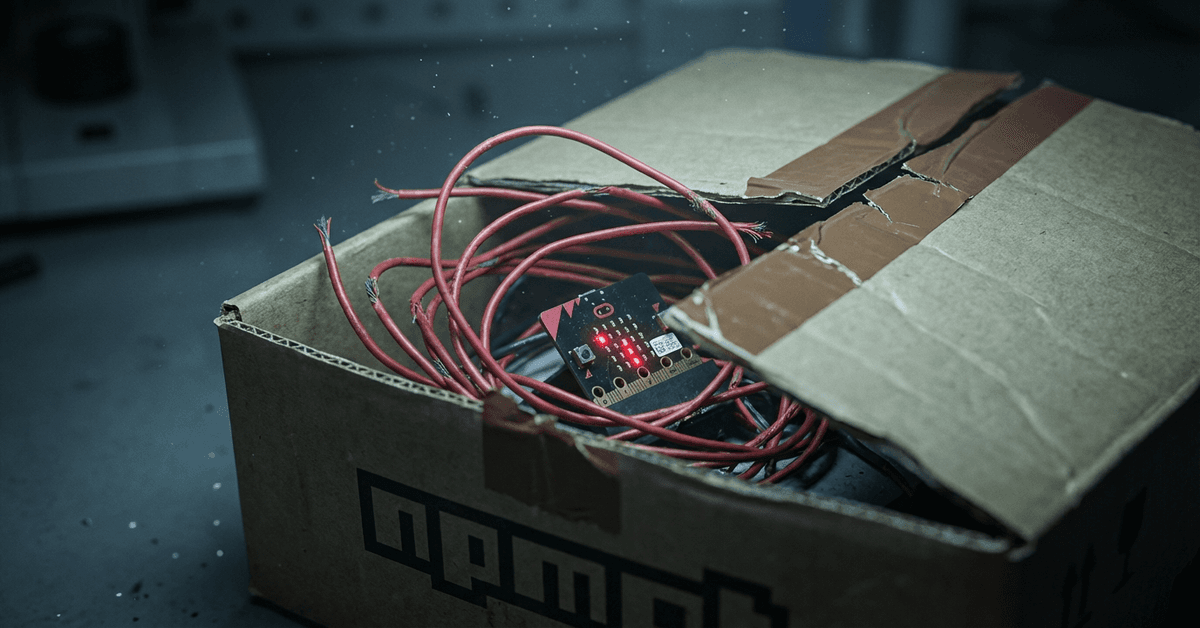

Someone hijacked an Axios maintainer's npm account and published two versions with a RAT that deletes itself after install. 50 million weekly downloads. The dropper leaves no trace. Here's exactly what happened and what to check.

I watched an AI coding agent write a perfect architecture doc, then ship 15 patches that violated every decision in it. When I confronted it, the explanation was more honest than anything I've read about RLHF.

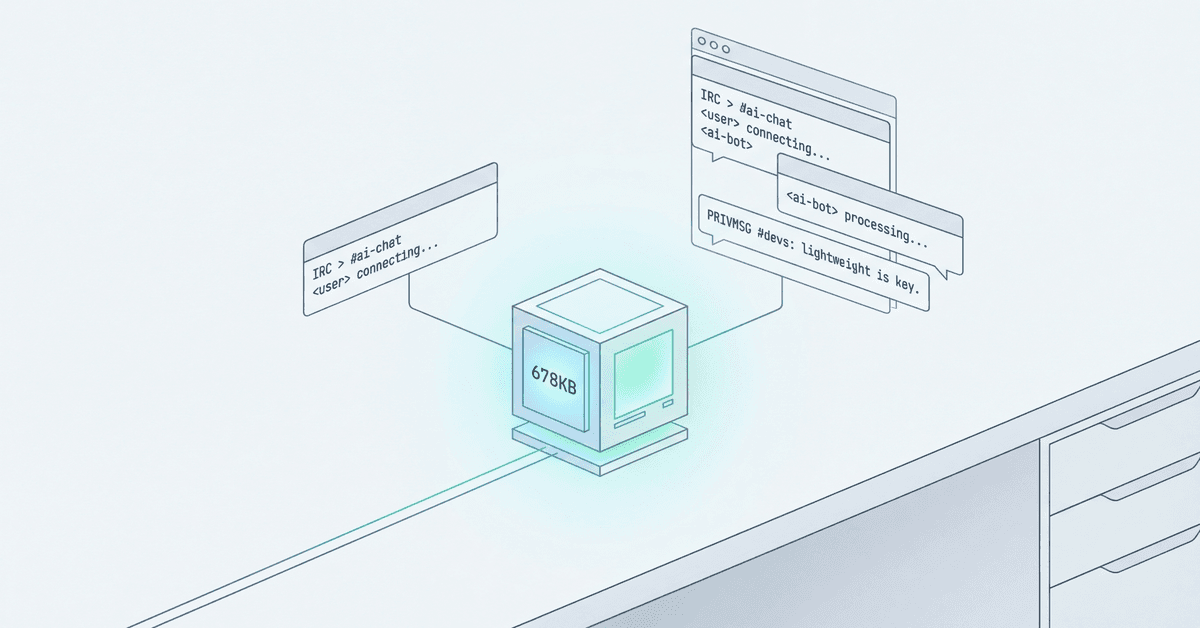

George Larson put an AI agent on a $7/month VPS. It handles real traffic with a 678 KB binary and 1 MB of RAM. The 'AI needs scale' narrative just broke.

AutoResearch got 56,000 stars for the wrong reason. Everyone focused on the AI agent. The real engineering is the four constraints that make unsupervised velocity safe.

DHH rewrote the Rails homepage to target AI agents instead of developers. The data says he's onto something, even if Rails isn't the most token-efficient framework in benchmarks.

A 397B parameter model running on 12GB of RAM. The trick isn't new ML theory. It's demand paging, the same architecture pattern we've used since the 1960s.

Cursor's PR velocity went up 5x. DryRun says 87% of AI PRs have vulnerabilities. The solution? More agents. Four autonomous security agents review every PR, scan for forgotten vulns, and auto-patch dependencies. Templates are public. The meta-layer is here.

Next.js 16.2 shipped AGENTS.md by default, bundled docs in node_modules, browser logs piped to terminal, and a CLI that gives agents DevTools via shell commands. Vercel isn't improving DX. They're building for a new user: the coding agent.

A software engineer's window into Eid al-Fitr. Not a lecture on Islam. Just what tonight and tomorrow actually look like from the inside.

Ollama 0.18 shipped with native OpenClaw integration. Local models now get tool calling, multi-agent workflows, and permission boundaries. No API costs, no data leaving your network.

Amazon.com went down for six hours because of AI-assisted code changes. A week later, they required senior engineer sign-offs. LinearB analyzed 8.1 million pull requests and found AI code waits 4.6x longer for review and ships 19% slower. The productivity gains were a mirage.

I picked DynamoDB through Amplify for a professional networking app with swipeable cards. The first two weeks were magic. Then we needed queries DynamoDB was never built to answer.

Everyone's arguing whether AI agents should use MCP or CLI tools. The answer depends on a question nobody's asking: does the model already know how to use the tool, or did your team build it last Tuesday?

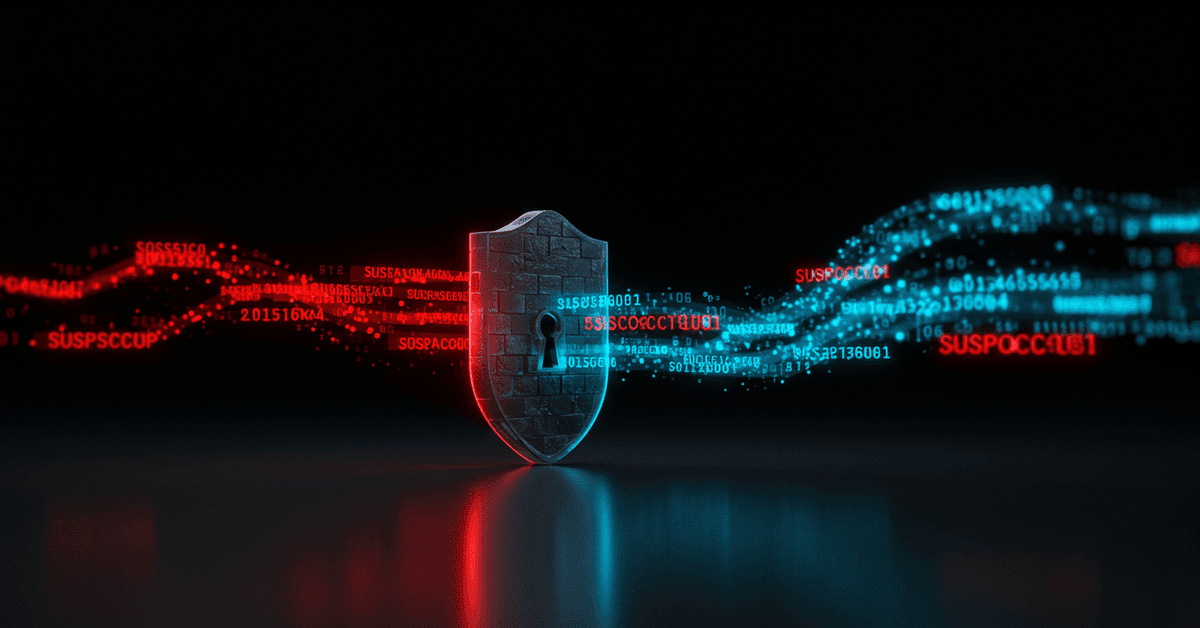

GitHub published the most sophisticated platform security for AI agents I've seen. Isolation, token quarantine, constrained outputs, audit trails. It doesn't stop the attacks that actually happened this month.

MCP servers have no input sanitization layer. Every JSON-RPC request flows straight from AI client to tool server, unfiltered. So I built one.

An autonomous AI agent breached McKinsey's internal AI platform in two hours. No credentials. No insider access. The entry point was SQL injection through JSON field names, a bug class older than most junior developers.

Someone put a prompt injection payload in a GitHub issue title. An AI triage bot executed it, poisoned the build cache, stole npm credentials, and pushed a rogue package to 4,000 developers. The full chain took five steps.

Agent skills became an open standard. MCP connects everything. But the layer between them, the one that keeps agents from failing catastrophically in production, barely exists.

A canonical URL mismatch between www and non-www kept my entire blog invisible to Google for three months. Six files, twelve line changes, and a sitemap resubmission fixed it. Here's how to check yours.

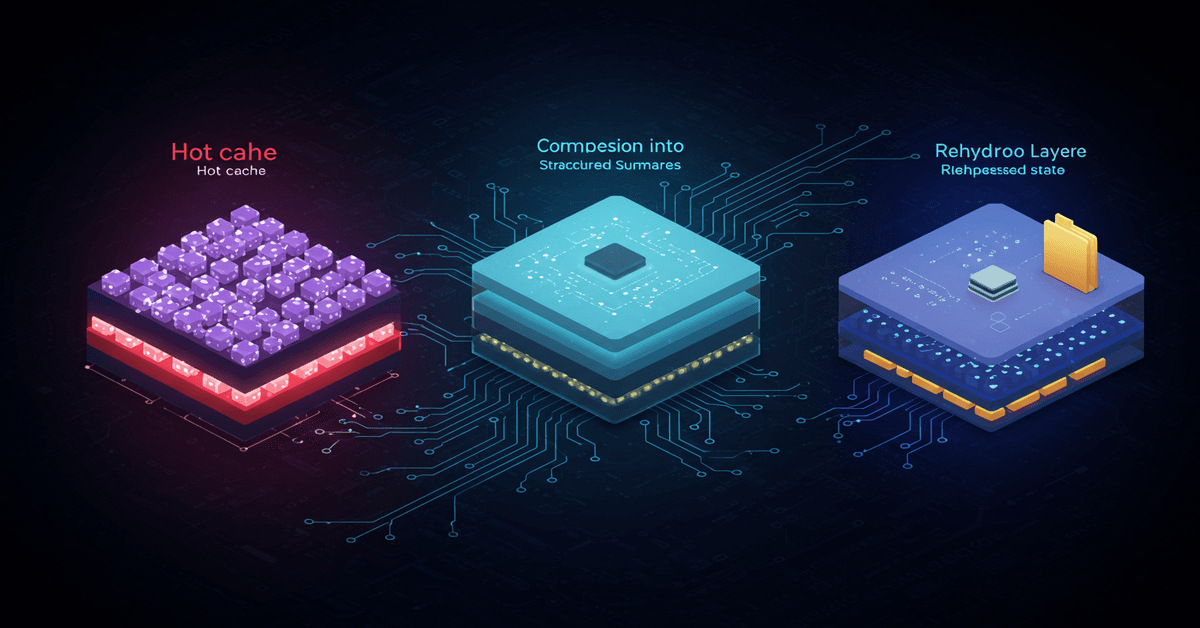

Claude Code manages your context through three systems: microcompaction, auto-compaction, and structured rehydration. Here's how the machinery actually works, and why most developers burn tokens without realizing it.

AI accelerates code production but expands scope, raises expectations, and shifts the bottleneck from implementation to judgment. Engineers are doing 2x the work and feeling 10x the burnout.

60,000 GitHub stars. One billion Docker pulls. Officially archived. MinIO's five-year wind-down from Apache 2.0 to AGPL to dead is the most dramatic open-source infrastructure collapse in years. Here's the migration playbook.

MCP servers are everywhere. Production-ready ones aren't. Here's the architecture I use after running MCP in real workloads: error boundaries, state isolation, security hardening, and scaling patterns that actually hold up.

Google told developers API keys aren't secrets. Then Gemini changed the rules. Truffle Security found 2,863 live keys on public websites that now access private Gemini endpoints, including keys belonging to Google itself. The attack is a single curl command.

Amplifying.ai ran 2,430 prompts against Claude Code and found it builds custom solutions in 12 of 20 categories. The tools it picks are becoming the default stack for a growing share of new projects.

One engineer, $1,100 in tokens, and 94% API coverage. Vinext is either the future of framework development or the most impressive demo that will never matter. I think it's both.

3.9 million requests across Java, Go, Node.js, and Python. Go wins on memory, Java on latency. But after running MCP servers in production for months, I think the benchmark misses what actually matters.

Boris Cherny says the software engineer title disappears in 2026. He's wrong about the title, right about the shift. Here's what 9 years of production engineering taught me about surviving it.

Cloudflare's Durable Objects give you single-threaded, globally unique compute with embedded SQLite. AWS has no equivalent. Here's how they change backend architecture.

AWS Lambda Durable Functions look like Step Functions killers. They're not. Here's when each one wins, what the checkpoint-and-replay model actually costs, and the architectural patterns I'd use in production.

Firefox just shipped setHTML in version 148, replacing the notorious innerHTML with something that actually sanitizes by default. Here's why this matters and what it means for your security posture.

When an independent browser engine switches from C++ to Rust mid-flight, it's not just a language choice. It's a bet on maintenance burden, contributor velocity, and long-term survival.

The best AI model finds 49% of backdoors in compiled binaries. With a 22% false positive rate. Here's what that means for your supply chain security strategy.

Most developers are using AI assistants inefficiently. Here's how separating planning from execution can 10x your productivity.

GGML.ai joined Hugging Face this week, creating a complete stack for running AI locally. The assumption that AI requires the cloud is already obsolete. We're just waiting for everyone to notice.

Dependabot opened thousands of PRs for a vulnerability that affected nobody. The real fix isn't more automation. It's smarter automation.

A startup just hit 17K tokens/sec on a single chip by hard-wiring Llama into silicon. The GPU monoculture in AI inference has an expiration date.

Every infrastructure decision is a bet on the future. After watching teams make the same mistakes across multiple startups, here's what actually matters when choosing your stack.

The Pentagon wants AI labs to allow 'all lawful use' of their models. Anthropic pushed back. Now the DoD is threatening to blacklist them. Here's why engineers should care.

Google's Gemini 3.1 Pro just dropped with a 77% on ARC-AGI-2, up from 31%. The benchmarks are staggering. But the people actually building with it keep saying the same thing: it can't call tools.

Claude Sonnet 4.6 just dropped and developers with early access prefer it over Opus 4.5. This isn't an accident. It's a pattern that should change how you pick models.

There's a new name for something engineers have been feeling for a year: token anxiety. The compulsive urge to always be prompting, always shipping. This is what that actually is.

Anthropic collapsed Claude Code's file output in v2.1.20. Devs pushed back immediately, and they were right. This isn't a UX preference. It's about catching AI mistakes before they cost you.

Apple's App Store got 557,000 new submissions last year, up 24%. Building an app went from a $50K project to a weekend. When development costs disappear, subscription pricing follows. The businesses that survive know exactly why.

OpenAI's GPT-5.2 conjectured a new formula in theoretical physics that humans missed for decades. A concrete data point on where AI reasoning actually stands.

Google and OpenAI both shipped major AI releases this week: one betting on deeper reasoning, one on faster inference. These aren't just product launches. They're two different theories about where the real bottleneck is.

Everyone's arguing GPT-5 vs Opus while the real bottleneck in LLM coding agents is something nobody talks about: the edit tool format.

287 Chrome extensions with 37.4 million installs are quietly exfiltrating browsing history to data brokers. Here's what was found, who's behind it, and what you can do about it.

Anthropic's AI writes nearly 100% of their code, but Microsoft research shows devs miss 40% more bugs reviewing AI code. The essential skill of 2026 is code cynicism.

The cost of testing an idea has dropped to zero. In 2026, we don't build MVPs to test tech feasibility anymore. We build them to test market feasibility.

A look at the shift from brute-force AI to bio-inspired efficiency and quantum computing breakthroughs.

Learn the key principles and patterns I've used to build Next.js applications that scale to millions of users, with insights from real-world production systems.

A technical deep dive into the stack used for this portfolio. Highlighting React Server Components, Tailwind's new engine, and performance benefits.
