I Closed the AI Agent Loop. They Stopped Making the Same Mistakes.

My AI agent applied seven patches to the same bug. Each one a fresh attempt. No accumulation. After connecting dispatch with memory, the same class of bug gets caught on the first try.

Three weeks ago, my AI coding agent applied seven sequential patches to fix a search system. Same class of bug. Seven times. Each patch addressed a symptom. None touched the root cause.

I watched it happen. The agent wrote a correct architecture document recommending dual-storage. Then it ignored its own document and built a flat table. When I pointed out the search was broken, it added a content filter. When that didn't work, a scoring adjustment. Then a threshold tweak. Then query expansion. Then a fallback algorithm. Then role-based scoring. Seven bandaids on a broken bone.

The agent had no memory of the first patch when it applied the seventh. Each fix was a fresh attempt by what was effectively a new person walking into the same broken system. No accumulation. No learning. Just an expensive, articulate amnesiac making the same mistake with slightly different syntax each time.

Today, that class of bug gets caught on the first try. Not because I wrote a rule. Because the agent remembers what happened last time.

Productive is not the same as learning

Here's the workflow most teams run with AI coding agents. Describe a task. The agent reads the codebase. It writes code. You review the PR. Next task.

Every task starts from zero.

The agent doesn't know it debugged a race condition in this repo last week. Doesn't know the team prefers explicit error handling over catch-all try-catch blocks. Doesn't know that the CI pipeline silently skips tests that don't match a specific naming convention. All of that knowledge existed in a context window that no longer exists.

Context windows are not memory. They're scratch space. When the session ends, the knowledge is gone. The next session is a stranger walking into the same office, sitting at the same desk, with none of the institutional knowledge of the person who sat there yesterday.

I was running three agents dispatched through Ouija, my kanban-to-agent pipeline engine. They were productive. PRs opened, cards advanced, Telegram notifications pinged. But card number 50 was no smarter than card number 1. I had built a dispatch system. I hadn't built a learning system. The difference matters more than I expected.

One config change

The fix was connecting two systems I'd already built separately.

Ouija handles dispatch: card assigned on the kanban board, webhook fires, guards evaluate, agent clones the repo and writes code, PR opens, card advances. The control plane.

Engram handles memory: five cognitive systems (episodic, semantic, procedural, sensory, associative), consolidation cycles that compress raw conversations into durable knowledge, decay that prunes noise so signal gets louder over time. The brain.

Connecting them was one config change. Engram runs as an MCP server. Ouija supports a configDir per agent profile that can include MCP server configurations. Point the agent's config at a directory containing Engram's MCP setup, and every time Ouija dispatches that agent, it starts with memory tools available. It can recall past work. It can ingest new learnings. Consolidation runs between tasks.
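To make "one config pointing at another config" concrete, here is a minimal sketch that writes an MCP server entry into a per-agent config directory. The `mcpServers` layout follows a convention used by several MCP clients. The file name `mcp.json`, the directory name, and the `engram-mcp` launch command are assumptions for illustration only; they are not Ouija's or Engram's documented interface.

```python
import json
import tempfile
from pathlib import Path


def write_mcp_config(config_dir: Path, server_name: str, command: str, args: list) -> Path:
    """Write a minimal MCP server entry into an agent's config directory."""
    config_dir.mkdir(parents=True, exist_ok=True)
    config = {
        "mcpServers": {
            server_name: {"command": command, "args": args},
        }
    }
    path = config_dir / "mcp.json"  # hypothetical file name
    path.write_text(json.dumps(config, indent=2))
    return path


# Hypothetical values: a throwaway directory standing in for the agent's
# configDir, and an assumed launch command for the memory server.
agent_config_dir = Path(tempfile.mkdtemp()) / "agent-profile"
config_path = write_mcp_config(agent_config_dir, "engram", "engram-mcp", [])
print(config_path.read_text())
```

The point of the sketch is the shape of the coupling: the dispatcher only needs a directory path, and the memory server only needs to speak MCP.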

No integration library. No shared module. No API wrapper. One config pointing at another config. MCP as the interface contract meant these two systems didn't need to know anything about each other's internals. I was suspicious when it worked on the first try, because things that feel easy are usually hiding complexity somewhere. But in this case, the architecture had already absorbed the complexity. The connection was genuinely simple.

45 minutes became 8

Before Engram, the agent encounters a failing test in the authentication module. Reads the test file. Reads the source. Tries three different approaches over 45 minutes, burning through 80,000 tokens, before eventually discovering that the mock database needs a specific seed file loaded in a setup hook.

After Engram, different task, same repo, different failing test. The agent calls memory_recall with the task context. Engram returns a procedural memory: "Tests in the auth module require the seed file at test/fixtures/auth-seed.sql to be loaded before assertions. Setup hook is in test/helpers/auth.ts." The agent reads the helper, applies the pattern. Eight minutes. 15,000 tokens.

That's not a synthetic benchmark. That happened on a real task with real time pressure.
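For scale, the arithmetic on that single pair of tasks works out to roughly 82% less time and 81% fewer tokens. A quick check, using only the four numbers quoted above:

```python
def reduction(before: float, after: float) -> float:
    """Fractional reduction going from `before` to `after`."""
    return (before - after) / before


time_saved = reduction(45, 8)               # minutes
tokens_saved = reduction(80_000, 15_000)    # tokens

print(f"time saved: {time_saved:.0%}, tokens saved: {tokens_saved:.0%}")
```

This is one comparison, not an average, so it says nothing about variance across tasks.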

The seven-patch disaster from the opening? That architectural lesson ("separate searchable content from raw storage, fix ingestion before search") is now a semantic memory in Engram. Next time an agent encounters degrading search quality, it recalls the pattern and checks the data profile before touching the search algorithm. The lesson was expensive. It only needed to be expensive once.

The behavior nobody programmed

Here's what I didn't expect.

After running the connected system for a few weeks, I noticed something I hadn't designed for. The agent started recalling its own past mistakes before writing any code. It would begin a task, call memory_recall with the task description, get back a procedural memory about a similar task where it had made a specific error, and adjust its approach before writing a single line.

It was using memory to prevent bugs it would have otherwise introduced.

Nobody programmed this. The consolidation cycles did it. Light sleep compressed raw sessions into digests. Deep sleep extracted procedural knowledge from those digests. The dream cycle found connections between tasks that happened weeks apart in different parts of the codebase. The decay pass pruned the noise. What was left was instinct: compressed, high-confidence knowledge that surfaced at the right moment without being explicitly asked for.
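The post does not describe how Engram's decay pass works internally. As a toy model of the idea only, the sketch below assumes exponential decay of a confidence score with a fixed half-life and a pruning floor. The `Memory` fields, the 14-day half-life, and the 0.2 floor are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    text: str
    confidence: float  # 0..1, assumed score
    age_days: float    # assumed: days since the memory was last reinforced


def decay_pass(memories, half_life_days=14.0, floor=0.2):
    """Halve confidence every `half_life_days`; drop anything below `floor`."""
    kept = []
    for m in memories:
        decayed = m.confidence * 0.5 ** (m.age_days / half_life_days)
        if decayed >= floor:
            kept.append(Memory(m.text, decayed, m.age_days))
    return kept


memories = [
    Memory("auth tests need the seed file loaded first", 0.9, 7),
    Memory("one-off typo in a commit message", 0.3, 28),
]
survivors = decay_pass(memories)
print([m.text for m in survivors])
```

With these made-up numbers the reinforced lesson survives and the one-off detail is pruned, which is the "signal gets louder" effect in miniature.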

This is the difference between a system that stores information and a system that forms understanding. RAG retrieves documents. This forms something closer to instinct.

I kept expecting the effect to turn out to be a fluke, an artifact of a small sample size. After 30 tasks, the pattern held. The agent's token usage per task dropped. Its first-attempt success rate climbed. And the types of mistakes it made shifted from "didn't know the pattern" to "genuine edge case," which is exactly what you want.

When the memory backfired

I should be honest. The first time I connected these systems, the agent got slower. Not faster.

It was recalling too much. Every task triggered a flood of marginally relevant memories. A task about "fix the login page CSS" pulled back procedural memories about authentication security because both contained the word "login." The agent spent tokens processing irrelevant context, and worse, it started second-guessing correct approaches because some retrieved memory contradicted what it was about to do.

More memory is not better memory. I learned this the hard way.

The fix was in Engram's intent analysis layer. Queries get classified into intent types (TASK_START, DEBUGGING, RECALL_EXPLICIT, and eight others), and each type triggers a different retrieval strategy with different depth limits and memory type preferences. Tightening the TASK_START strategy (prefer procedural over semantic, limit association traversal to two hops, suppress low-confidence matches) brought the noise back under control.

Memory without intent classification is just a bigger haystack. The classification is what turns retrieval into recall.
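A minimal sketch of what per-intent retrieval strategies could look like. The intent names TASK_START and DEBUGGING come from the text above; the `Strategy` and `Candidate` types, the thresholds, and the DEBUGGING settings are assumptions for illustration, not Engram's actual implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Strategy:
    preferred_types: tuple  # memory types in priority order
    max_hops: int           # association traversal limit
    min_confidence: float   # suppress matches below this score


@dataclass(frozen=True)
class Candidate:
    text: str
    memory_type: str
    hops: int
    confidence: float


# Hypothetical settings; only the TASK_START shape mirrors the prose.
STRATEGIES = {
    "TASK_START": Strategy(("procedural", "semantic"), max_hops=2, min_confidence=0.6),
    "DEBUGGING": Strategy(("episodic", "procedural"), max_hops=3, min_confidence=0.4),
}


def select(intent, candidates, limit=3):
    """Filter candidates by the intent's strategy, then rank by type and confidence."""
    s = STRATEGIES[intent]
    eligible = [
        c for c in candidates
        if c.memory_type in s.preferred_types
        and c.hops <= s.max_hops
        and c.confidence >= s.min_confidence
    ]
    eligible.sort(key=lambda c: (s.preferred_types.index(c.memory_type), -c.confidence))
    return eligible[:limit]


candidates = [
    Candidate("auth security checklist", "semantic", 1, 0.7),
    Candidate("load the auth seed file in the setup hook", "procedural", 1, 0.9),
    Candidate("login page CSS note", "semantic", 3, 0.8),  # too many hops
    Candidate("vague hunch about mocks", "procedural", 1, 0.3),  # low confidence
    Candidate("raw session log", "episodic", 0, 0.9),  # not preferred at task start
]
picked = [c.text for c in select("TASK_START", candidates)]
print(picked)
```

The same candidate pool would yield a different shortlist under DEBUGGING, which is the whole point: the filter is chosen by what the agent is trying to do, not by keyword overlap.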

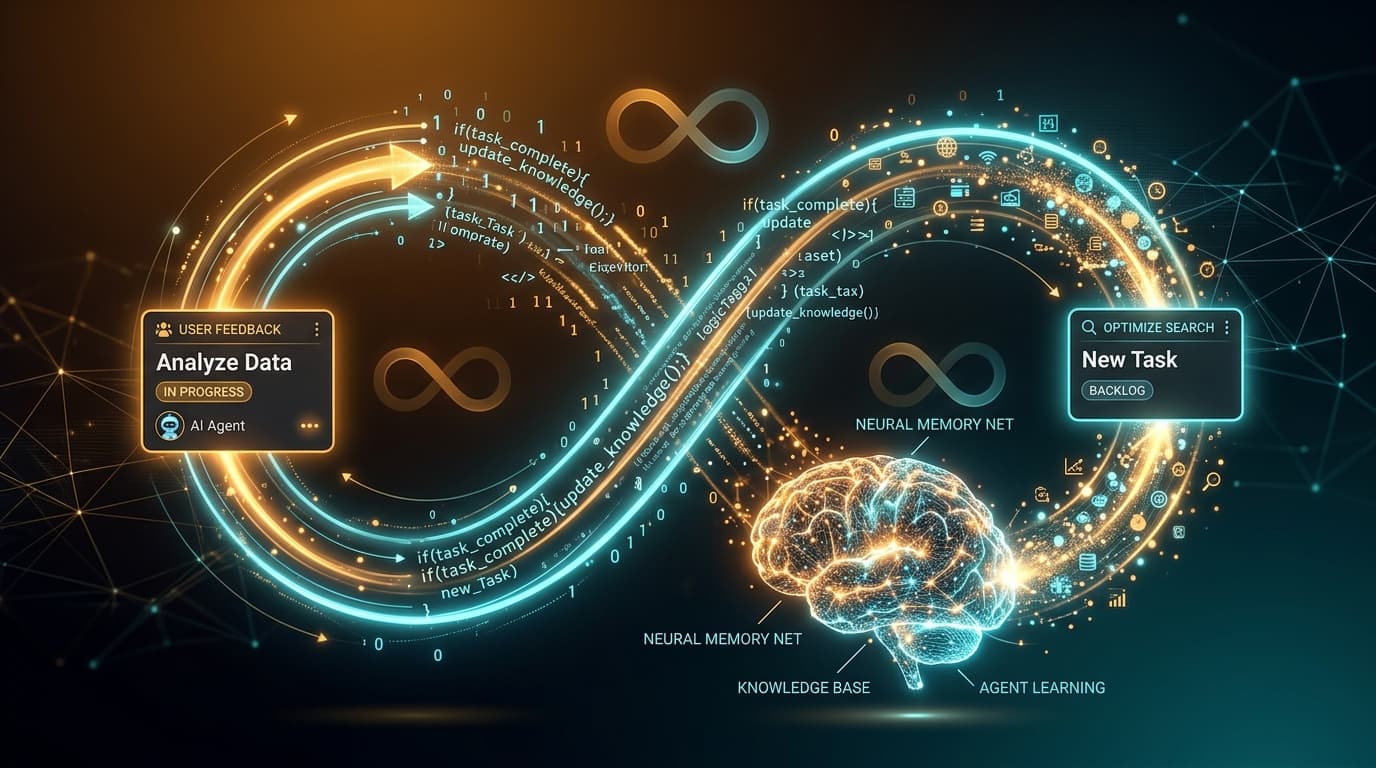

The loop

Here's the cycle, running end to end:

Card created on the kanban board. Agent assigned. Ouija dispatches. The agent's first action is memory_recall with the task context, pulling relevant procedural memories, semantic facts about the repo, and associative links to similar past work. The agent codes with context: it knows the testing conventions, the error handling preferences, the gotchas in this particular module. PR opens. Card advances. Telegram pings.

Between tasks, the agent ingests what it learned. Consolidation runs: sessions compress into digests, digests crystallize into durable semantic and procedural memories, the dream cycle discovers connections between unrelated tasks, decay prunes the noise.

Next card starts. The agent is slightly better than it was on the last one. Not in a vague "AI improves over time" way. In a "45 minutes became 8 minutes on the same class of problem" way.

Card to PR to memory to better card. That's the loop.
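The cycle can be sketched as a toy simulation. Everything here is a stand-in: `ToyMemory` uses naive keyword overlap for recall (the very approach that caused noise earlier, kept only to keep the sketch short), `fake_agent` is a hypothetical placeholder for the real coding agent, and the 45 and 8 minute figures are borrowed from the example above purely as illustrative return values.

```python
class ToyMemory:
    """In-memory stand-in for a memory server; not Engram's API."""

    def __init__(self):
        self.lessons = []

    def recall(self, task: str):
        # Naive recall: any lesson sharing a word with the task description.
        words = set(task.lower().split())
        return [l for l in self.lessons if words & set(l.lower().split())]

    def ingest(self, lesson: str) -> None:
        if lesson not in self.lessons:
            self.lessons.append(lesson)


def fake_agent(task, context):
    """Hypothetical agent: faster when a relevant lesson is recalled."""
    minutes = 8 if context else 45
    lesson = "auth tests need the seed file loaded in the setup hook"
    return minutes, lesson


def run_card(task, memory, agent):
    context = memory.recall(task)          # 1. recall before coding
    minutes, lesson = agent(task, context)  # 2. do the work with context
    memory.ingest(lesson)                  # 3. store what was learned
    return minutes


memory = ToyMemory()
timings = [
    run_card("fix failing auth test", memory, fake_agent),
    run_card("fix another auth test", memory, fake_agent),
]
print(timings)
```

The second card benefits from the first only because step 3 ran in between; remove the ingest call and every card starts from zero again.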

Both systems are open source

- Ouija (dispatch): github.com/muhammadkh4n/ouija

- Engram (memory): github.com/muhammadkh4n/engram

Each works independently. Ouija dispatches agents without memory. Engram gives memory to any agent framework via MCP. But together they close the loop that neither can close alone. And it's the loop that turned my agents from productive tools into something that actually learns.
