I Compared Three AI Memory Systems. They Can't Even Agree on What Memory Means.

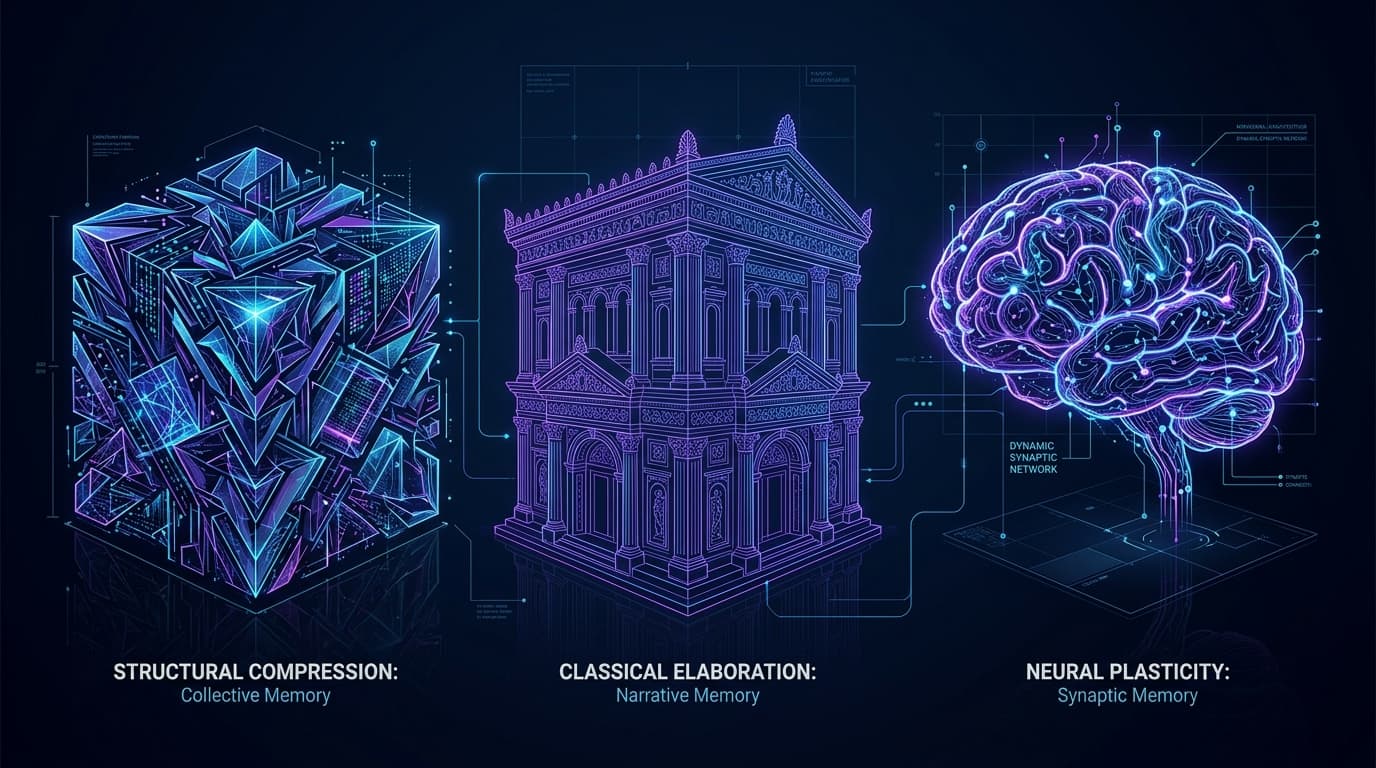

SimpleMem compresses conversations into atoms. MemPalace stores every word in a spatial hierarchy. Engram forgets on purpose. After a week with all three, I think they're solving different problems.

Three open-source projects. Same problem: AI agents forget everything between sessions. Three completely different answers to what "remembering" even means.

SimpleMem says compress it. MemPalace says store everything. I built Engram and said forget most of it.

After spending a week with all three, I'm convinced they're each solving a different problem while pretending to solve the same one. The AI memory space doesn't have a winner. It has three religions.

The Compressor: SimpleMem

SimpleMem comes out of UC Santa Cruz with an actual arXiv paper and reproducible benchmarks. The thesis: take raw conversation streams, break them into atomic memory units (self-contained facts with resolved coreferences and absolute timestamps), index with semantic embeddings, and retrieve by meaning.

A messy debugging conversation becomes two clean atoms: "Migration M-17 failed due to index ordering" and "ORM generates CREATE INDEX before CREATE TABLE in version 4.2." Independent. Timestamped. Searchable. The conversation itself gets tossed. Only the distilled facts survive.
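To make the atom idea concrete, here is a minimal sketch of what storing and retrieving those two facts could look like. This is not SimpleMem's code: the `MemoryAtom` class, the `retrieve` function, and the placeholder timestamp are all hypothetical, and a bag-of-words cosine similarity stands in for the real semantic embeddings.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class MemoryAtom:
    """One self-contained fact with an absolute timestamp (hypothetical shape)."""
    text: str
    timestamp: datetime


def bag_of_words(text: str) -> Counter:
    # Stand-in for a semantic embedding: lowercase token counts.
    return Counter(re.findall(r"[a-z0-9.-]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse token-count vectors.
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, atoms: list[MemoryAtom], k: int = 1) -> list[MemoryAtom]:
    # Rank atoms by similarity to the query and keep the top k.
    q = bag_of_words(query)
    ranked = sorted(atoms, key=lambda a: cosine(q, bag_of_words(a.text)), reverse=True)
    return ranked[:k]


ts = datetime(2025, 1, 1, tzinfo=timezone.utc)  # placeholder timestamp, not from the source
atoms = [
    MemoryAtom("Migration M-17 failed due to index ordering", ts),
    MemoryAtom("ORM generates CREATE INDEX before CREATE TABLE in version 4.2", ts),
]
top = retrieve("what happened with migration M-17?", atoms)
print(top[0].text)
```

Notice what the data structure has no field for: anything that links one atom to another.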

The efficiency numbers are real. 43% F1 on LoCoMo-10, beating Mem0 by 26%. 531 tokens per query versus 16,000+ for naive context stuffing. They also just shipped Omni-SimpleMem for multimodal memory (text, image, audio, video), hitting new SOTA on both LoCoMo (0.613 F1, +47%) and Mem-Gallery (0.810 F1, +51%). The academic rigor is legit.
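For a sense of scale, here is the back-of-envelope arithmetic on those two token counts. The query volume and the price per million tokens are assumptions picked for illustration, not figures from the paper, and 16,000 is treated as a lower bound.

```python
SIMPLEMEM_TOKENS = 531   # tokens per query, as cited above
NAIVE_TOKENS = 16_000    # lower bound cited for naive context stuffing


def context_cost(tokens_per_query: int, queries: int, usd_per_million_tokens: float) -> float:
    """Cost of the context tokens alone for a given number of queries."""
    return tokens_per_query * queries * usd_per_million_tokens / 1_000_000


# Hypothetical workload and price, for illustration only.
QUERIES = 1_000_000
PRICE = 1.0  # assumed USD per million input tokens

reduction = NAIVE_TOKENS / SIMPLEMEM_TOKENS
naive_cost = context_cost(NAIVE_TOKENS, QUERIES, PRICE)
simplemem_cost = context_cost(SIMPLEMEM_TOKENS, QUERIES, PRICE)

print(f"{reduction:.1f}x fewer context tokens per query")
print(f"naive: ${naive_cost:,.0f}  compressed: ${simplemem_cost:,.0f}")
```

Under those assumed numbers the context bill drops by roughly a factor of thirty.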

But here's what nags at me. When you decompose conversations into atomic facts, you lose the connections between them. "The migration failed" and "we reordered the steps" become two separate atoms. The causal link (we reordered BECAUSE it failed) is implied by timestamps but never explicitly modeled. There's no edge in a graph saying "this caused that."

For lookup ("what happened with migration M-17?"), atoms work fine. For reasoning ("we keep seeing ordering-related failures across different projects, what's the pattern?"), they're too isolated. You get facts without understanding. A library of index cards, perfectly organized, with no librarian who's read the books.

The Palace: MemPalace

MemPalace takes the opposite philosophy. Store everything. Every word. No AI deciding what matters. No compression. No extraction.

From their README: "Other systems let AI decide what's worth remembering. It extracts 'user prefers Postgres' and throws away the conversation where you explained WHY."

That's a legitimate critique. Lossy compression loses context. MemPalace's answer: keep the whole conversation, then make it navigable using a spatial metaphor borrowed from ancient Greek orators.

The hierarchy has five levels: Wings (people and projects), Halls (memory types like facts, events, discoveries), Rooms (specific topics like "auth-migration"), Closets (summaries pointing to originals), and Drawers (the verbatim files). When the same room appears in different wings, a "tunnel" cross-references them automatically. It's a spatial index over uncompressed text. Charming metaphor, practical implementation.
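A rough sketch of that hierarchy, and of how a tunnel could be detected, is below. This is my reading of the description, not MemPalace's actual schema: the `Drawer` fields, the `find_tunnels` helper, and the wing names and file paths are all made up for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Drawer:
    """One verbatim file, addressed by its place in the palace (hypothetical shape)."""
    wing: str    # person or project
    hall: str    # memory type: facts, events, discoveries
    room: str    # specific topic
    closet: str  # summary that points at the original
    path: str    # where the verbatim text lives (made-up paths below)


def find_tunnels(drawers: list[Drawer]) -> dict[str, list[str]]:
    """Return rooms that appear in more than one wing, with the wings they connect."""
    wings_by_room: dict[str, set[str]] = defaultdict(set)
    for d in drawers:
        wings_by_room[d.room].add(d.wing)
    return {
        room: sorted(wings)
        for room, wings in wings_by_room.items()
        if len(wings) > 1
    }


drawers = [
    Drawer("project-a", "events", "auth-migration", "cutover went badly", "a/0001.txt"),
    Drawer("project-b", "facts", "auth-migration", "token format notes", "b/0007.txt"),
    Drawer("project-b", "facts", "billing", "invoice rounding rule", "b/0008.txt"),
]
tunnels = find_tunnels(drawers)
print(tunnels)
```

Nothing in the original text is touched here. The structure only decides where a file sits and which rooms link across wings.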

The numbers are striking. 96.6% R@5 on LongMemEval in raw mode. Zero API calls. Local ChromaDB. That's the highest published score on that benchmark, free or paid. When Milla Jovovich (yes, the Resident Evil actress) and Ben Sigman open-sourced it, the repo hit 7,000 stars in days.
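If R@5 is unfamiliar: under one common definition, it is the share of queries where at least one relevant item shows up in the top five results. The sketch below uses that definition with made-up IDs. The benchmark's exact scoring rules may differ, so treat this as an illustration of the metric, not of LongMemEval's harness.

```python
def recall_at_k(ranked_ids: list[list[str]], relevant_ids: list[set[str]], k: int = 5) -> float:
    """Fraction of queries with at least one relevant ID among the top k results."""
    if not ranked_ids:
        raise ValueError("need at least one query")
    hits = sum(
        1
        for ranked, relevant in zip(ranked_ids, relevant_ids)
        if relevant & set(ranked[:k])
    )
    return hits / len(ranked_ids)


# Three hypothetical queries: the first two find their target in the top 5, the third does not.
ranked = [
    ["d3", "d9", "d1", "d4", "d7", "d2"],
    ["d5", "d8", "d2", "d6", "d0", "d1"],
    ["d4", "d3", "d9", "d8", "d7", "d6"],
]
relevant = [{"d1"}, {"d5"}, {"d6"}]
score = recall_at_k(ranked, relevant, k=5)
print(f"R@5 = {score:.3f}")
```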

I want to credit something specific here. When the community found problems within hours of launch (overstated AAAK compression claims, features implied as shipped that weren't wired in yet), Milla and Ben published an honest errata note within 48 hours. "We'd rather be right than impressive." They itemized exactly what was wrong, what was still true, and what they were fixing. That's rare in open source and I respect it.

But. MemPalace is built for retrieval, not learning. It answers "what did we say about X?" with near-perfect accuracy. It can't answer "what should I do differently next time?" because that requires synthesis across experiences, not recall of a single one. Five debugging sessions about race conditions sit in five separate drawers in five different rooms. There's no mechanism to extract the pattern across them into a general skill the agent can apply.

The palace metaphor is revealing in a way the authors might not intend. The ancient method of loci works because the human brain does the consolidation. You walk through the palace and your associative cortex fires connections between rooms. MemPalace has the palace. It doesn't have the cortex.

The Brain: Engram

Full disclosure: this one is mine. Adjust your skepticism accordingly.

Engram doesn't compete on the same axis. It doesn't try to compress more efficiently or store more completely. It models how biological memory actually works: multiple systems, consolidation cycles, and deliberate forgetting.

Five memory systems (sensory buffer, episodic, semantic, procedural, associative network with eight edge types). Four consolidation cycles (light sleep compresses episodes into digests, deep sleep extracts durable knowledge, dream cycle discovers connections across unrelated experiences, decay pass prunes low-confidence noise). Raw conversations flow in, get consolidated into increasingly abstract representations, and the noise fades while the signal strengthens.

The result: an agent that doesn't just remember what happened. It develops something closer to instinct. Three debugging sessions about race conditions consolidate into a procedural memory: "when middleware modifies the session object, check for race conditions." That's not a retrieved fact. It's a learned skill.
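Here is a toy illustration of that promotion step. It is deliberately not Engram's implementation: the `Episode` shape, the `consolidate` function, and the three-session threshold are assumptions, and the lesson text is supplied by hand where a real system would have to extract it.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Episode:
    session: str  # which session the episode came from
    topic: str    # what kind of problem it was
    lesson: str   # what fixed it (hand-written here)


def consolidate(episodes: list[Episode], min_sessions: int = 3) -> dict[str, str]:
    """Promote a topic to procedural memory once it recurs across enough distinct sessions."""
    sessions_by_topic: dict[str, set[str]] = defaultdict(set)
    lessons_by_topic: dict[str, Counter] = defaultdict(Counter)
    for e in episodes:
        sessions_by_topic[e.topic].add(e.session)
        lessons_by_topic[e.topic][e.lesson] += 1
    return {
        topic: lessons_by_topic[topic].most_common(1)[0][0]  # most frequent lesson wins
        for topic, sessions in sessions_by_topic.items()
        if len(sessions) >= min_sessions
    }


rule = "when middleware modifies the session object, check for race conditions"
episodes = [
    Episode("s1", "race-condition", rule),
    Episode("s2", "race-condition", rule),
    Episode("s3", "race-condition", rule),
    Episode("s3", "flaky-test", "rerun with a fixed random seed"),
]
procedural = consolidate(episodes)
print(procedural)
```

The one-off flaky-test episode stays episodic. Only the pattern that recurred across three sessions becomes a rule.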

I built this because I hit a wall that neither compression nor hoarding could solve. 14,000 episodes in a database. 87% noise (heartbeats, tool calls, system metadata). SimpleMem's approach would have compressed the noise into smaller noise. MemPalace's approach would have organized the noise into beautifully labeled rooms full of noise. Neither addresses signal-to-noise. Forgetting does.
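A decay pass can be sketched in a few lines. This version is a simplification under stated assumptions, not Engram's code: confidence halves every `half_life_days` since a memory was last reinforced, anything that falls below `threshold` is dropped, and the example memories and all the numbers are invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Memory:
    text: str
    confidence: float  # 0.0 to 1.0 at the time of last reinforcement
    age_days: float    # days since last reinforcement


def decay_pass(memories: list[Memory], half_life_days: float = 30.0,
               threshold: float = 0.2) -> list[Memory]:
    """Apply exponential decay to confidence and prune whatever falls below the threshold."""
    kept = []
    for m in memories:
        decayed = m.confidence * 0.5 ** (m.age_days / half_life_days)
        if decayed >= threshold:
            kept.append(Memory(m.text, decayed, m.age_days))
    return kept


memories = [
    Memory("heartbeat ok", confidence=0.1, age_days=1),                 # noise from the start
    Memory("tool call metadata", confidence=0.3, age_days=60),          # never reinforced
    Memory("Migration M-17 failed due to index ordering", 0.9, 30),     # real signal
]
kept = decay_pass(memories)
print([m.text for m in kept])
```

Compression and indexing would both keep all three rows. The decay pass keeps one.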

Where Each One Wins (Honestly)

SimpleMem wins on efficiency. At scale, 531 tokens per query instead of 16,000+ is real money. The benchmarks are reproducible, the arXiv paper is citable, and Omni-SimpleMem's multimodal extension is genuinely ahead of the field. If your constraint is cost-per-query and you need academic validation, start here.

MemPalace wins on retrieval completeness. 96.6% R@5 with zero API calls is not a vanity metric. For use cases where you need to prove a specific conversation happened with specific words (legal, compliance, audit trails), the verbatim-everything approach is the correct one. The local-only, zero-cloud architecture is a real privacy advantage. And the knowledge graph with temporal validity windows is genuinely useful for team memory.

Engram wins when agents need to get smarter over time. When task 50 should be faster than task 1. When the same class of bug should be caught on the first attempt, not the fourth. When you want agents that develop instincts across sessions rather than building larger and larger indexes. The tradeoff is retrieval completeness. Engram will never hit 96.6% on LongMemEval because it deliberately forgets things. That's the point.

What None of Us Have Solved

Here's the part that keeps me up at night.

All three systems, mine included, are building memory for individual agents in single-user contexts. The real problem is harder: how do you build a system where a new agent on day one inherits six months of collective experience from agents that came before it?

Engram supports shared Supabase-backed stores. MemPalace has multi-user wings. SimpleMem has cloud-hosted MCP. But true institutional knowledge transfer between agents at scale, the kind where Agent B avoids a mistake because Agent A made it three weeks ago on a different project, nobody has cracked it. Not at production scale. Not with the reliability you'd need to actually trust it.

That's the frontier. Not better compression. Not bigger palaces. Not smarter consolidation. The question is whether the answer looks more like a library, a zip file, or a brain.

I'm betting on the brain. But I've been wrong before (see: seven patches on a broken architecture). The good news is all three projects are open source, so you can bet on whichever philosophy matches your problem.

- SimpleMem: github.com/aiming-lab/SimpleMem

- MemPalace: github.com/milla-jovovich/mempalace

- Engram: github.com/muhammadkh4n/engram
